Load Balancer

Dynamically distribute your traffic to optimise your application’s scalability.

OVHcloud Load Balancer

OVHcloud Load Balancer makes it easier to maintain the scalability, high availability, and resilience of your applications by dynamically distributing traffic across multiple instances and regions. Improve user experience by automating traffic management and load handling, while keeping costs in check. By combining Load Balancer and Floating IP, you can create a secure single entry point to your application, while also enabling failover scenarios and protecting your private resources.

A secure and highly available cloud Load Balancer

The Load Balancer dynamically distributes traffic to manage peak loads with ease. Its distributed infrastructure and wide range of security features make it an essential tool for organisations seeking to increase the reliability and performance of their applications, websites, or databases.

Benefits

Deployable in Regions

Build your infrastructure strategically – take a geographical approach to create and deploy the cloud Load Balancer service closer to your customers.

Connected to private networks

When paired with the OVHcloud vRack, the Load Balancer can serve as a gateway between public and private networks, isolating your application nodes on the private network.

Simplified management

Manage your Load Balancer using the tool that best fits your needs: the OpenStack Horizon UI or the API.

Private workloads

Configure the Load Balancer for private use, so that it is accessible only from within the private network that hosts your backend instances.

Integrated with the Public Cloud ecosystem

Deploy and manage your cloud Load Balancer directly from your Public Cloud environment, thanks to Octavia API support and other compatible tools (Terraform, Ansible, Salt, etc.)

Multiple health check protocols

Set the criteria that determine when an instance or node should be excluded. You can choose from a range of options available in the official OpenStack Load Balancer documentation, including standard TCP verification, HTTP code and many others.
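As a rough illustration of how such criteria can exclude a node, the sketch below models a member that is marked unhealthy after several consecutive failed HTTP checks and restored after several consecutive successes. The class name, thresholds, and accepted status codes are illustrative assumptions, not the actual OpenStack implementation.

```python
from dataclasses import dataclass


@dataclass
class MemberHealth:
    """Tracks one backend member's health from successive check results."""
    max_retries_down: int = 3  # assumed: failures in a row before exclusion
    max_retries: int = 2       # assumed: successes in a row before re-inclusion
    healthy: bool = True
    _fails: int = 0
    _passes: int = 0

    def record(self, http_status: int, expected_codes=(200,)) -> bool:
        """Record one HTTP check result and return the current health state."""
        if http_status in expected_codes:
            self._passes += 1
            self._fails = 0
            if not self.healthy and self._passes >= self.max_retries:
                self.healthy = True
        else:
            self._fails += 1
            self._passes = 0
            if self.healthy and self._fails >= self.max_retries_down:
                self.healthy = False
        return self.healthy


member = MemberHealth()
# Three failures in a row exclude the member; two successes bring it back.
states = [member.record(code) for code in (200, 503, 503, 503, 200, 200)]
print(states)
```

Requiring several consecutive results before changing state avoids removing a node because of a single slow or dropped check.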

SSL/TLS encryption

Load Balancer supports SSL/TLS encryption to ensure data confidentiality. You can quickly create your Let's Encrypt DV SSL certificates, included at no additional charge with any cloud Load Balancer service plan. You can also upload your certificate if you work with a specific certificate authority (CA).

Compatible with Public Cloud instances

The Load Balancer can manage several node types, such as standard OpenStack instances and Kubernetes containers. With the private network, you can leverage Hosted Private Cloud virtual machines and Bare Metal servers as a backend.

Key features

Built for high availability

Load Balancer is built on a distributed architecture, and is backed by a 99.99% SLA. It uses its health check feature to distribute load across available instances.

Designed for automated deployment

Choose the cloud load balancer size that fits your needs. Configure and automate it with the OpenStack API, UI, or CLI, or with the OVHcloud API. Deployment can also be automated with Terraform.

Built-in security

To ensure data security and privacy, the Load Balancer comes with free HTTPS termination and benefits from our Anti-DDoS infrastructure, which provides real-time protection against network attacks.

Discover the Load Balancer range

The following table provides informational values to help you choose the plan that best meets your needs.

The listener types that each value applies to are shown in parentheses.

| Load Balancer size | Bandwidth (all listeners) | Concurrent active sessions (HTTP/TCP/HTTPS*) | Sessions created per second (HTTP/TCP/HTTPS*) | Requests per second (HTTP/HTTPS) | SSL/TLS sessions created per second (TERMINATED_HTTPS*) | Requests per second (TERMINATED_HTTPS*) | Packets per second (UDP) |
|---|---|---|---|---|---|---|---|
| Size S | 200 Mbps (up/down) | 10k | 8k | 10k | 250 | 5k | 10k |
| Size M | 500 Mbps (up/down) | 20k | 10k | 20k | 500 | 10k | 20k |
| Size L | 2 Gbps (up/down) | 20k | 10k | 40k | 1,000 | 20k | 40k |
| Size XL | 4 Gbps (up/down) | 20k | 10k | 80k | 2,000 | 40k | 80k |

*An HTTPS listener is passthrough, meaning that SSL/TLS termination is handled by the load balancer members. By contrast, a TERMINATED_HTTPS listener handles SSL/TLS termination on the load balancer itself.
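One way to use these figures is to pick the smallest size whose informational limits cover an expected workload. The sketch below copies three columns from the table above. It assumes the bandwidth values are in megabits per second, and the function name and the choice of columns are illustrative only.

```python
# Informational values copied from the table above (bandwidth in Mbps).
# Plans are listed from smallest to largest.
PLANS = [
    {"size": "S", "bandwidth_mbps": 200, "concurrent_sessions": 10_000, "http_rps": 10_000},
    {"size": "M", "bandwidth_mbps": 500, "concurrent_sessions": 20_000, "http_rps": 20_000},
    {"size": "L", "bandwidth_mbps": 2_000, "concurrent_sessions": 20_000, "http_rps": 40_000},
    {"size": "XL", "bandwidth_mbps": 4_000, "concurrent_sessions": 20_000, "http_rps": 80_000},
]


def smallest_plan(bandwidth_mbps, concurrent_sessions, http_rps):
    """Return the smallest size covering all three needs, or None if none does."""
    for plan in PLANS:
        if (plan["bandwidth_mbps"] >= bandwidth_mbps
                and plan["concurrent_sessions"] >= concurrent_sessions
                and plan["http_rps"] >= http_rps):
            return plan["size"]
    return None


print(smallest_plan(bandwidth_mbps=300, concurrent_sessions=15_000, http_rps=12_000))
```

Because the published values are informational, a result from a helper like this is a starting point for testing rather than a guarantee.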

Manage high volumes of traffic and seasonal activity

With Load Balancer, you can manage traffic growth and decline by seamlessly adding and removing instances, such as pool members, to your configuration in just a few clicks or via an API call.
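To give an idea of what such an API call might look like, the sketch below builds the requests for adding and removing a pool member without sending them. The paths and field names are assumed to follow the OpenStack Octavia v2 API and should be checked against the API reference. The pool ID, member ID, and address are placeholders.

```python
import json


def add_member_request(pool_id: str, address: str, port: int, weight: int = 1):
    """Build (method, path, body) for adding a member to a pool."""
    path = f"/v2/lbaas/pools/{pool_id}/members"  # assumed Octavia v2 path
    body = {"member": {"address": address, "protocol_port": port, "weight": weight}}
    return "POST", path, json.dumps(body)


def remove_member_request(pool_id: str, member_id: str):
    """Build (method, path, body) for removing a member from a pool."""
    path = f"/v2/lbaas/pools/{pool_id}/members/{member_id}"
    return "DELETE", path, None


# Placeholder identifiers, for illustration only.
method, path, body = add_member_request("POOL_ID", "10.0.0.12", 8080)
print(method, path, body)
print(remove_member_request("POOL_ID", "MEMBER_ID"))
```

Keeping request construction separate from sending makes the scaling logic easy to test before it is wired to a real endpoint and authentication token.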

Optimise instances' performance with HTTPS encryption offloading

With HTTPS termination, you can shift TLS/SSL encryption and decryption to the Load Balancer. Your instances are relieved of that work and can devote their resources to your application logic.

Blue/green or canary deployment

With Load Balancer, you can switch between blue and green environments quickly, and roll back just as easily. Use L7 policies and weighted routing to implement canary deployments, so that a new version receives only a share of the traffic while you confirm that performance and user experience hold up.
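The effect of weighted routing in a canary deployment can be pictured with a smooth weighted round-robin, a common scheduling technique used here purely for illustration. It is not claimed to be the algorithm the Load Balancer uses, and the pool names and weights are hypothetical.

```python
from collections import Counter


def weighted_picks(weights: dict, n: int) -> list:
    """Return n targets chosen by smooth weighted round-robin."""
    total = sum(weights.values())
    current = {name: 0 for name in weights}
    picks = []
    for _ in range(n):
        # Every target earns credit in proportion to its weight.
        for name, weight in weights.items():
            current[name] += weight
        # The target with the most credit serves the request, then pays it back.
        chosen = max(current, key=current.get)
        current[chosen] -= total
        picks.append(chosen)
    return picks


# Hypothetical canary: one request in four goes to the new version.
picks = weighted_picks({"stable": 3, "canary": 1}, 8)
print(picks)
print(Counter(picks))
```

Raising the canary weight step by step shifts more traffic to the new version, and setting it back to zero acts as the rollback.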

Load Balancer scenarios

The Load Balancer is compatible with three main architecture types. Depending on the scenario, the architecture may or may not include Floating IP and Gateway.

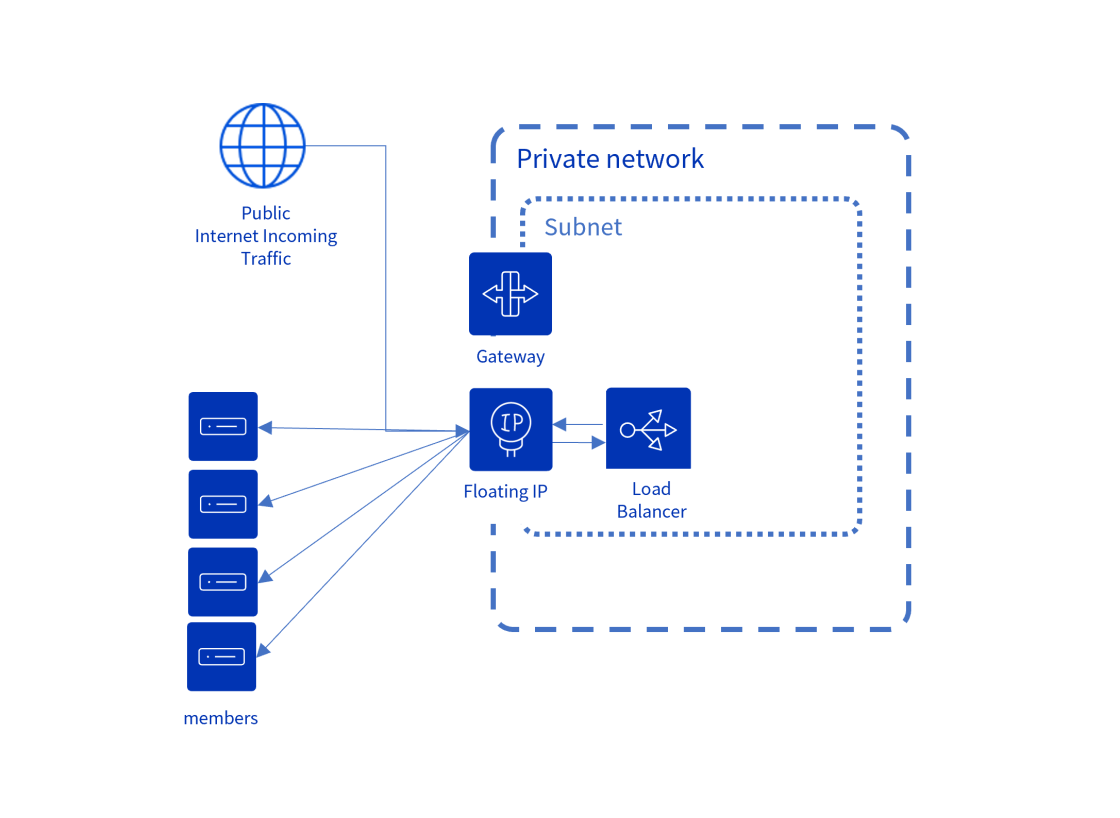

Public to Private

Incoming traffic from the internet is directed to the Floating IP connected to the Load Balancer. The instances behind the Load Balancer are on a private network and have no public IP, keeping them completely private and isolated from the internet.

Public to Public

Incoming traffic from the internet arrives at a Floating IP linked to the Load Balancer. The Load Balancer routes the traffic to instances through a public IP. Thus, the Load Balancer uses the Floating IP for egress to reach these instances.

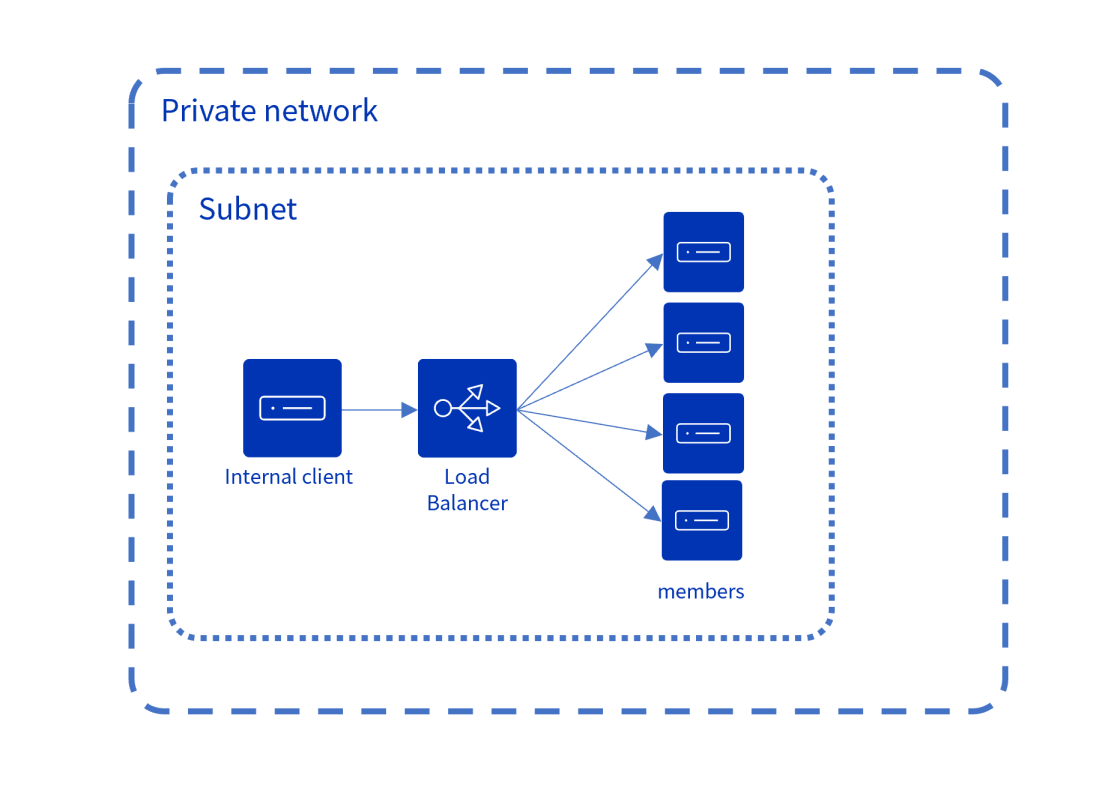

Private to Private

Incoming traffic from a private network is routed to instances that can be accessed through this network. In this case, neither a Floating IP nor a Gateway is required.

Guides

Concepts – Public Cloud Networking

Understand concepts of Public Cloud networking.

Getting started with Load Balancer on Public Cloud

Discover how to launch a Load Balancer on Public Cloud.

Deploying a Public Cloud Load Balancer

Find out how to configure the Public Cloud Load Balancer.

Configuring a secure Load Balancer with Let's Encrypt

Discover how to configure a secure Public Cloud Load Balancer with Let's Encrypt.

FAQ

What is a cloud load balancer?

The cloud load balancer functions similarly to a traditional load balancer, but it is hosted and managed in the cloud. To prevent server overload, it automatically distributes traffic across multiple cloud servers.

What are the advantages of a cloud load balancer?

A cloud load balancer allows you to efficiently manage traffic for your cloud applications. It provides high availability, scalability, and security, along with flexible pay-as-you-go billing and easy API-based management.

What is the difference between Load Balancer for Kubernetes and the standard OVHcloud Load Balancer?

Load Balancer for Kubernetes is designed to be used with our Managed Kubernetes solution. It provides an interface that is compatible with Kubernetes, which means you can easily manage your Kubernetes Load Balancer using native tools.

Load Balancer, on the other hand, is specifically designed to be used with our cloud solutions. It is based on OpenStack Octavia and leverages OpenStack API, enabling automation via tools such as Terraform, Ansible, or Salt.

What is the difference between a cloud load balancer and a cloud CDN?

A cloud load balancer distributes application or website traffic across multiple cloud servers. This improves availability, reliability, and performance by preventing server overload, and allows for scalability as demand increases. While cloud load balancers can operate at various layers of the OSI model, they commonly operate on Layer 4 (transport) and Layer 7 (application).

A Cloud Content Delivery Network (CDN) is a cloud network of distributed servers that delivers web content and services closer to the user. CDN caches static content like HTML pages and images in multiple ‘edge locations’ around the world. This speeds up content delivery, reduces latency, and shortens loading times.

What is Layer-7 HTTP(S) load balancing?

Layer-7 HTTP(S) load balancing operates at the application layer, the highest layer of the Open Systems Interconnection (OSI) model. It distributes incoming web traffic across multiple servers based on the content of the HTTP/HTTPS request, such as the URL path, headers, or cookies. This makes it more sophisticated than lower-layer load balancing, because it understands the content of the data being transferred.
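A minimal sketch of content-based routing, assuming rules that match on the URL path prefix. The rule list, pool names, and function name are hypothetical and only illustrate the idea of choosing a backend pool from the request content.

```python
from urllib.parse import urlsplit

# Hypothetical rules: the first matching path prefix decides the pool.
RULES = [
    ("/api/", "api-pool"),
    ("/static/", "static-pool"),
]
DEFAULT_POOL = "web-pool"


def choose_pool(url: str) -> str:
    """Pick a backend pool from the path of the requested URL."""
    path = urlsplit(url).path
    for prefix, pool in RULES:
        if path.startswith(prefix):
            return pool
    return DEFAULT_POOL


for url in ("https://example.com/api/users?id=1",
            "https://example.com/static/logo.png",
            "https://example.com/"):
    print(url, "->", choose_pool(url))
```

A Layer-4 load balancer could not make this distinction, because it sees only addresses and ports, not the request path.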

Why is my Load Balancer spawned by region?

OpenStack regions determine the availability of Public Cloud solutions like the Load Balancer. Each region has its own OpenStack platform, offering a set of computing, storage and network resources. More details are available on the regional availability page.

What protocols can I use with my Load Balancer?

The supported protocols for Load Balancer are TCP, HTTP, HTTPS, TERMINATED_HTTPS, UDP, SCTP and HTTP/2.

How does the Load Balancer determine if hosts are healthy or not?

Load Balancer uses a health monitor to check if backend services are alive. You can configure several protocols for that purpose, including HTTP, TLS, TCP, UDP, SCTP and PING.

I have my own SSL certificate - can I use it?

Sure! You can choose between the OVHcloud Control Panel or OVHcloud API to upload your own SSL certificate, depending on whether you want to do it manually or automate the process.

I don’t know how to generate an SSL certificate; how can I use HTTPS LBaaS?

That’s okay! With the OVHcloud Control Panel, you can effortlessly generate and use your own Let’s Encrypt SSL DV certificate with the Load Balancer, which makes deployment simpler. The Let’s Encrypt SSL DV certificate is included with the Load Balancer at no additional charge.